
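Following the release of Qwen3.6-Plus, we are excited to open-source Qwen3.6-35B-A3B, a sparse yet highly capable Mixture-of-Experts (MoE) model with 35 billion total parameters and only 3 billion active. Despite its efficiency, Qwen3.6-35B-A3B excels at agentic coding. It substantially outperforms its predecessor Qwen3.5-35B-A3B and competes with dense models such as Qwen3.5-27B and Gemma4-31B. It remains natively multimodal and supports both thinking and non-thinking modes, making it one of the most versatile open-source models available today. Qwen3.6-35B-A3B is now live in Qwen Studio, available via API, and released to the community as open weights.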

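Qwen3.6-35B-A3B is a fully open-source MoE model (35B total / 3B active parameters). Key features:

- Outstanding agentic coding, rivaling much larger models
- Strong multimodal perception and reasoning

You can chat with it interactively in Qwen Studio, call it through the Alibaba Cloud Model Studio (Bailian) API under the name Qwen3.6-Flash (coming soon), or download the weights from Hugging Face and ModelScope.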
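To put the "35B total / 3B active" split in perspective, here is a back-of-the-envelope sketch. It assumes the common rule of thumb of about 2 FLOPs per active parameter per generated token (ignoring attention over the context) and bf16 weights at 2 bytes per parameter. The parameter counts are the rounded figures from the model names, so treat the outputs as rough orders of magnitude, not measurements.

```python
# Rounded parameter counts taken from the model names (not exact figures).
TOTAL_PARAMS = 35e9      # total parameter count of Qwen3.6-35B-A3B
ACTIVE_PARAMS = 3e9      # parameters used for each token
DENSE_27B_PARAMS = 27e9  # Qwen3.5-27B is dense: every parameter is active
BYTES_PER_PARAM = 2      # assumption: bf16 weights, no quantization


def active_fraction(active: float, total: float) -> float:
    """Share of the weights that participate in one token's forward pass."""
    return active / total


def forward_flops_per_token(active: float) -> float:
    """Rule of thumb: about 2 FLOPs (one multiply, one add) per active parameter.

    Ignores attention over the context, so it underestimates long-context cost.
    """
    return 2 * active


def weight_memory_gb(total: float, bytes_per_param: float) -> float:
    """Memory for the weights alone (no KV cache or activations), in GB (1e9 bytes)."""
    return total * bytes_per_param / 1e9


frac = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
moe_flops = forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = forward_flops_per_token(DENSE_27B_PARAMS)

print(f"active fraction: {frac:.1%}")
print(f"FLOPs/token: MoE {moe_flops:.1e} vs dense 27B {dense_flops:.1e} "
      f"({dense_flops / moe_flops:.0f}x)")
print(f"bf16 weights: {weight_memory_gb(TOTAL_PARAMS, BYTES_PER_PARAM):.0f} GB")
```

The takeaway from this approximation: per-token compute tracks the active parameters, while the memory needed to hold the weights tracks the total, because every expert has to be loaded even though only a few are used for each token.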

Performance

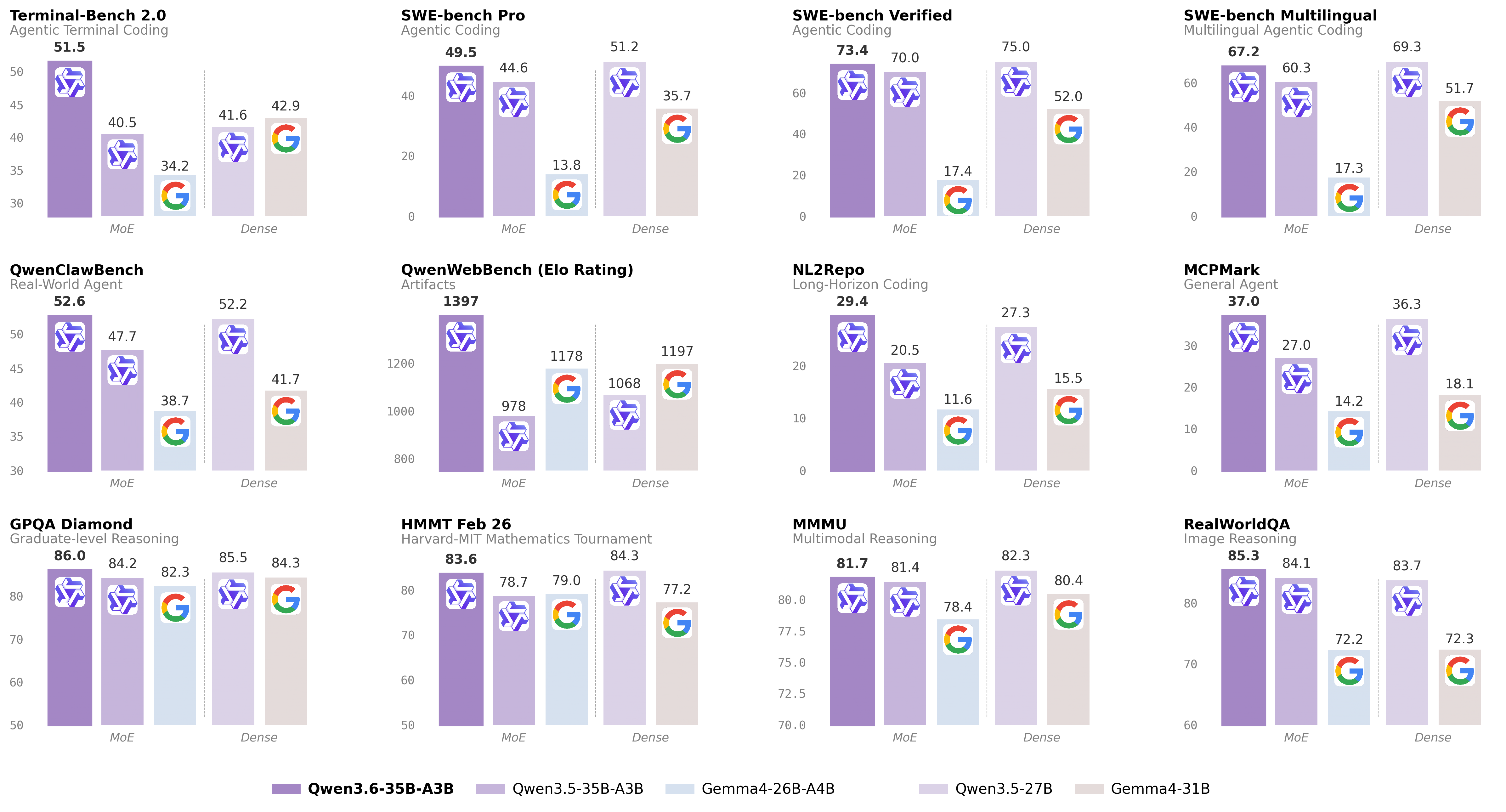

Below is a comprehensive comparison of Qwen3.6-35B-A3B with models of similar size across a range of tasks and modalities.

Natural Language

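With only 3B active parameters, Qwen3.6-35B-A3B outperforms the 27B dense model Qwen3.5-27B on several key coding benchmarks (for example Terminal-Bench 2.0, NL2Repo, and QwenWebBench) and substantially outperforms its direct predecessor, Qwen3.5-35B-A3B, on agentic coding and reasoning tasks.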

| Benchmark | Qwen3.5-27B | Gemma4-31B | Qwen3.5-35B-A3B | Gemma4-26BA4B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|
| **Coding Agent** | | | | | |
| SWE-bench Verified | 75.0 | 52.0 | 70.0 | 17.4 | 73.4 |
| SWE-bench Multilingual | 69.3 | 51.7 | 60.3 | 17.3 | 67.2 |
| SWE-bench Pro | 51.2 | 35.7 | 44.6 | 13.8 | 49.5 |
| Terminal-Bench 2.0 | 41.6 | 42.9 | 40.5 | 34.2 | 51.5 |
| Claw-Eval Avg | 64.3 | 48.5 | 65.4 | 58.8 | 68.7 |
| Claw-Eval Pass^3 | 46.2 | 25.0 | 51.0 | 28.0 | 50.0 |
| SkillsBench Avg5 | 27.2 | 23.6 | 4.4 | 12.3 | 28.7 |
| QwenClawBench | 52.2 | 41.7 | 47.7 | 38.7 | 52.6 |
| NL2Repo | 27.3 | 15.5 | 20.5 | 11.6 | 29.4 |
| QwenWebBench | 1068 | 1197 | 978 | 1178 | 1397 |
| **General Agent** | | | | | |
| TAU3-Bench | 68.4 | 67.5 | 68.9 | 59.0 | 67.2 |
| VITA-Bench | 41.8 | 43.0 | 29.1 | 36.9 | 35.6 |
| DeepPlanning | 22.6 | 24.0 | 22.8 | 16.2 | 25.9 |
| Tool Decathlon | 31.5 | 21.2 | 28.7 | 12.0 | 26.9 |
| MCPMark | 36.3 | 18.1 | 27.0 | 14.2 | 37.0 |
| MCP-Atlas | 68.4 | 57.2 | 62.4 | 50.0 | 62.8 |
| WideSearch | 66.4 | 35.2 | 59.1 | 38.3 | 60.1 |
| **Knowledge** | | | | | |
| MMLU-Pro | 86.1 | 85.2 | 85.3 | 82.6 | 85.2 |
| MMLU-Redux | 93.2 | 93.7 | 93.3 | 92.7 | 93.3 |
| SuperGPQA | 65.6 | 65.7 | 63.4 | 61.4 | 64.7 |
| C-Eval | 90.5 | 82.6 | 90.2 | 82.5 | 90.0 |
| **STEM & Reasoning** | | | | | |
| GPQA | 85.5 | 84.3 | 84.2 | 82.3 | 86.0 |
| HLE | 24.3 | 19.5 | 22.4 | 8.7 | 21.4 |
| LiveCodeBench v6 | 80.7 | 80.0 | 74.6 | 77.1 | 80.4 |
| HMMT Feb 25 | 92.0 | 88.7 | 89.0 | 91.7 | 90.7 |
| HMMT Nov 25 | 89.8 | 87.5 | 89.2 | 87.5 | 89.1 |
| HMMT Feb 26 | 84.3 | 77.2 | 78.7 | 79.0 | 83.6 |
| IMOAnswerBench | 79.9 | 74.5 | 76.8 | 74.3 | 78.9 |
| AIME26 | 92.6 | 89.2 | 91.0 | 88.3 | 92.7 |

- SWE-Bench Series: Internal agent scaffold (bash + file-edit tools); temp=1.0, top_p=0.95, 200K context window. We correct some problematic tasks in the public set of SWE-bench Pro and evaluate all baselines on the refined benchmark.

- Terminal-Bench 2.0: Harbor/Terminus-2 harness; 3h timeout, 32 CPU/48 GB RAM; temp=1.0, top_p=0.95, top_k=20, max_tokens=80K, 256K ctx; avg of 5 runs.

- SkillsBench: Evaluated via OpenCode on 78 tasks (self-contained subset, excluding API-dependent tasks); avg of 5 runs.

- NL2Repo: Others are evaluated via Claude Code (temp=1.0, top_p=0.95, max_turns=900).

- QwenClawBench: An internal real-user-distribution Claw agent benchmark (open-sourcing soon); temp=0.6, 256K ctx.

- QwenWebBench: An internal front-end code generation benchmark; bilingual (EN/CN), 7 categories (Web Design, Web Apps, Games, SVG, Data Visualization, Animation, and 3D); auto-render + multimodal judge (code/visual correctness); BT/Elo rating system.

- TAU3-Bench: We use the official user model (gpt-5.2, low reasoning effort) + default BM25 retrieval.

- VITA-Bench: Average of subdomain scores; claude-4-sonnet is used as the judge because the official judge (claude-3.7-sonnet) is no longer available.

- MCPMark: GitHub MCP v0.30.3; Playwright responses truncated at 32K tokens.

- MCP-Atlas: Public-set score; gemini-2.5-pro as the judge.

- AIME 26: We use the full AIME 2026 (I & II), so scores may differ from those reported in the Qwen3.5 notes.
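The claim that Qwen3.6-35B-A3B substantially outperforms its direct predecessor on agentic coding can be checked against the Coding Agent rows of the table. The sketch below copies those scores (Qwen3.5-35B-A3B vs Qwen3.6-35B-A3B) and computes the per-benchmark change. QwenWebBench is left out because it is an Elo-style rating on a different scale. `CODING_AGENT` and `deltas` are illustrative names, and the differences are plain arithmetic on the published numbers, not new results.

```python
# Coding Agent rows from the table: (Qwen3.5-35B-A3B, Qwen3.6-35B-A3B).
# QwenWebBench is omitted: it is an Elo-style rating, not a percentage score.
CODING_AGENT: dict[str, tuple[float, float]] = {
    "SWE-bench Verified": (70.0, 73.4),
    "SWE-bench Multilingual": (60.3, 67.2),
    "SWE-bench Pro": (44.6, 49.5),
    "Terminal-Bench 2.0": (40.5, 51.5),
    "Claw-Eval Avg": (65.4, 68.7),
    "Claw-Eval Pass^3": (51.0, 50.0),
    "SkillsBench Avg5": (4.4, 28.7),
    "QwenClawBench": (47.7, 52.6),
    "NL2Repo": (20.5, 29.4),
}


def deltas(rows: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Absolute change (new - old), rounded to one decimal like the table."""
    return {name: round(new - old, 1) for name, (old, new) in rows.items()}


changes = deltas(CODING_AGENT)
improved = [name for name, d in changes.items() if d > 0]

# Print largest gains first.
for name, d in sorted(changes.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:<24} {d:+.1f}")
print(f"improved on {len(improved)} of {len(changes)} benchmarks")
```

Run as-is, it puts SkillsBench Avg5 (+24.3) and Terminal-Bench 2.0 (+11.0) at the top and shows Claw-Eval Pass^3 (-1.0) as the only regression among these nine rows.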

Vision Language

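Qwen3.6 is natively multimodal. With only about 3B active parameters, Qwen3.6-35B-A3B delivers perception and multimodal reasoning far beyond its size. On most vision-language benchmarks it performs on par with Claude Sonnet 4.5, and on some it pulls ahead. Its strength in spatial intelligence stands out: 92.0 on RefCOCO and 50.8 on ODInW13.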

| Benchmark | Qwen3.5-27B | Claude-Sonnet-4.5 | Gemma4-31B | Gemma4-26BA4B | Qwen3.5-35B-A3B | Qwen3.6-35B-A3B |
|---|---|---|---|---|---|---|
| **STEM and Puzzle** | | | | | | |
| MMMU | 82.3 | 79.6 | 80.4 | 78.4 | 81.4 | 81.7 |
| MMMU-Pro | 75.0 | 68.4 | 76.9* | 73.8* | 75.1 | 75.3 |
| MathVista (mini) | 87.8 | 79.8 | 79.3 | 79.4 | 86.2 | 86.4 |
| ZEROBench_sub | 36.2 | 26.3 | 26.0 | 26.3 | 34.1 | 34.4 |
| **General VQA** | | | | | | |
| RealWorldQA | 83.7 | 70.3 | 72.3 | 72.2 | 84.1 | 85.3 |
| MMBench EN-DEV-v1.1 | 92.6 | 88.3 | 90.9 | 89.0 | 91.5 | 92.8 |
| SimpleVQA | 56.0 | 57.6 | 52.9 | 52.2 | 58.3 | 58.9 |
| HallusionBench | 70.0 | 59.9 | 67.4 | 66.1 | 67.9 | 69.8 |
| **Text Recognition and Document Understanding** | | | | | | |
| OmniDocBench1.5 | 88.9 | 85.8 | 80.1 | 74.4 | 89.3 | 89.9 |
| CharXiv (RQ) | 79.5 | 67.2 | 67.9 | 69.0 | 77.5 | 78.0 |
| CC-OCR | 81.0 | 68.1 | 75.7 | 74.5 | 80.7 | 81.9 |
| AI2D_TEST | 92.9 | 87.0 | 89.0 | 88.3 | 92.6 | 92.7 |
| **Spatial Intelligence** | | | | | | |
| RefCOCO (avg) | 90.9 | -- | -- | -- | 89.2 | 92.0 |
| ODInW13 | 41.1 | -- | -- | -- | 42.6 | 50.8 |
| EmbSpatialBench | 84.5 | 71.8 | -- | -- | 83.1 | 84.3 |
| RefSpatialBench | 67.7 | -- | -- | -- | 63.5 | 64.3 |
| **Video Understanding** | | | | | | |
| VideoMME (w/ sub.) | 87.0 | 81.1 | -- | -- | 86.6 | 86.6 |
| VideoMME (w/o sub.) | 82.8 | 75.3 | -- | -- | 82.5 | 82.5 |
| VideoMMMU | 82.3 | 77.6 | 81.6 | 76.0 | 80.4 | 83.7 |
| MLVU | 85.9 | 72.8 | -- | -- | 85.6 | 86.2 |
| MVBench | 74.6 | -- | -- | -- | 74.8 | 74.6 |
| LVBench | 73.6 | -- | -- | -- | 71.4 | 71.4 |

- Cells marked -- indicate scores that are not available or not applicable.

Getting Started with Qwen3.6-35B-A3B

Qwen3.6-35B-A3B is coming soon to Alibaba Cloud Model Studio. We are working hard to get it ready; please stay tuned.

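The open weights of Qwen3.6-35B-A3B are available on Hugging Face and ModelScope for local deployment, and the model can also be called through the Alibaba Cloud Model Studio (Bailian) API under the name qwen3.6-flash. You can also try it instantly in Qwen Studio.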

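The model integrates seamlessly with popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code, streamlining development workflows and enabling an efficient, context-aware coding experience.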

API Usage

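This release supports the preserve_thinking feature, which keeps the thinking content of all previous turns in the messages. It is recommended for agentic tasks.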
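A minimal sketch of building a multi-turn request with preserve_thinking turned on. It assumes (1) the flag goes in `extra_body` next to `enable_thinking`, as in the commented-out `preserve_thinking` line of the Chat Completions example in this post, and (2) each earlier assistant turn is sent back with its thinking in a `reasoning_content` field. The second point is an assumption about the message format that this post does not spell out, so check the API documentation before relying on it. `append_assistant_turn` and `build_request` are hypothetical helpers.

```python
from typing import Any


def append_assistant_turn(
    messages: list[dict[str, Any]], reasoning: str, answer: str
) -> None:
    """Append a finished assistant turn, keeping its thinking.

    ASSUMPTION: prior thinking is passed back in a "reasoning_content" field.
    """
    messages.append(
        {"role": "assistant", "content": answer, "reasoning_content": reasoning}
    )


def build_request(
    messages: list[dict[str, Any]],
    model: str = "qwen3.6-flash",
    preserve_thinking: bool = True,
) -> dict[str, Any]:
    """Keyword arguments for client.chat.completions.create(**request)."""
    return {
        "model": model,
        "messages": messages,
        "extra_body": {
            "enable_thinking": True,
            # Keep thinking from earlier turns in context (recommended for agents).
            "preserve_thinking": preserve_thinking,
        },
        "stream": True,
    }


# Hypothetical agent session: user turn, finished assistant turn, next user turn.
history: list[dict[str, Any]] = [{"role": "user", "content": "List the files in src/."}]
append_assistant_turn(
    history, reasoning="I should run ls on src/.", answer="src/ contains main.py."
)
history.append({"role": "user", "content": "Now open main.py."})
request = build_request(history)
```

The returned dict can be passed as `client.chat.completions.create(**request)` to an OpenAI-compatible client configured for Model Studio.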

Alibaba Cloud Model Studio (Bailian)

Alibaba Cloud Model Studio supports industry-standard protocols, including the OpenAI-compatible Chat Completions and Responses APIs and an Anthropic-compatible API.

Here is a code example using the Chat Completions API:

"""

Environment variables (per official docs):

DASHSCOPE_API_KEY: Your API Key from https://bailian.console.aliyun.com/

DASHSCOPE_BASE_URL: (optional) Base URL for compatible-mode API.

- Beijing: https://dashscope.aliyuncs.com/compatible-mode/v1

- Singapore: https://dashscope-intl.aliyuncs.com/compatible-mode/v1

- US (Virginia): https://dashscope-us.aliyuncs.com/compatible-mode/v1

DASHSCOPE_MODEL: (optional) Model name; override for different models.

"""

from openai import OpenAI

import os

api_key = os.environ.get("DASHSCOPE_API_KEY")

if not api_key:

raise ValueError(

"DASHSCOPE_API_KEY is required. "

"Set it via: export DASHSCOPE_API_KEY='your-api-key'"

)

client = OpenAI(

api_key=api_key,

base_url=os.environ.get(

"DASHSCOPE_BASE_URL",

"https://dashscope.aliyuncs.com/compatible-mode/v1",

),

)

messages = [{"role": "user", "content": "Introduce vibe coding."}]

model = os.environ.get(

"DASHSCOPE_MODEL",

"qwen3.6-flash",

)

completion = client.chat.completions.create(

model=model,

messages=messages,

extra_body={

"enable_thinking": True,

# "preserve_thinking": True,

},

stream=True

)

reasoning_content = "" # Full reasoning trace

answer_content = "" # Full response

is_answering = False # Whether we have entered the answer phase

print("\n" + "=" * 20 + "Reasoning" + "=" * 20 + "\n")

for chunk in completion:

if not chunk.choices:

print("\nUsage:")

print(chunk.usage)

continue

delta = chunk.choices[0].delta

# Collect reasoning content only

if hasattr(delta, "reasoning_content") and delta.reasoning_content is not None:

if not is_answering:

print(delta.reasoning_content, end="", flush=True)

reasoning_content += delta.reasoning_content

# Received content, start answer phase

if hasattr(delta, "content") and delta.content:

if not is_answering:

print("\n" + "=" * 20 + "Answer" + "=" * 20 + "\n")

is_answering = True

print(delta.content, end="", flush=True)

answer_content += delta.content

For more information, see the API documentation.

Coding and Agents

Qwen3.6-35B-A3B has strong agentic coding capabilities and integrates seamlessly with popular third-party coding assistants, including OpenClaw, Claude Code, and Qwen Code.

OpenClaw

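Qwen3.6-35B-A3B is compatible with OpenClaw (formerly Moltbot / Clawdbot), a self-hostable, open-source AI coding agent. Connect it to Model Studio (Bailian) to get a full agentic coding experience in your terminal. Use the following script to get started: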

```bash
# Node.js 22+
curl -fsSL https://molt.bot/install.sh | bash  # macOS / Linux

# Set your API key
export DASHSCOPE_API_KEY=<your_api_key>

# Launch OpenClaw
openclaw dashboard  # web browser
# openclaw tui  # Open a new terminal and start the TUI
```

On first use, edit ~/.openclaw/openclaw.json to point OpenClaw at Model Studio (Bailian).

Find or create the following fields and merge them in. Do not overwrite the whole file, so that your existing settings are preserved:

```json
{
  "models": {
    "mode": "merge",
    "providers": {
      "bailian": {
        "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
        "apiKey": "DASHSCOPE_API_KEY",
        "api": "openai-completions",
        "models": [
          {
            "id": "qwen3.6-flash",
            "name": "qwen3.6-flash",
            "reasoning": true,
            "input": ["text", "image"],
            "contextWindow": 131072,
            "maxTokens": 16384
          }
        ]
      }
    }
  },
  "agents": {
    "defaults": {
      "model": {
        "primary": "bailian/qwen3.6-flash"
      },
      "models": {
        "bailian/qwen3.6-flash": {}
      }
    }
  }
}
```
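The instructions above call for merging these fields into an existing file rather than overwriting it. A minimal sketch of that merge, assuming ~/.openclaw/openclaw.json is plain JSON (a file with comments or other JSON5 syntax would make `json.loads` fail; edit such a file by hand). `deep_merge`, `merge_config_file`, and `BAILIAN_PATCH` are hypothetical names for this example, not part of OpenClaw. Nested objects are merged key by key; lists and scalars from the patch replace existing values.

```python
import json
from pathlib import Path
from typing import Any

# The fields from the snippet above, as a Python dict (hypothetical helper data).
BAILIAN_PATCH: dict[str, Any] = {
    "models": {
        "mode": "merge",
        "providers": {
            "bailian": {
                "baseUrl": "https://dashscope.aliyuncs.com/compatible-mode/v1",
                "apiKey": "DASHSCOPE_API_KEY",
                "api": "openai-completions",
                "models": [
                    {
                        "id": "qwen3.6-flash",
                        "name": "qwen3.6-flash",
                        "reasoning": True,
                        "input": ["text", "image"],
                        "contextWindow": 131072,
                        "maxTokens": 16384,
                    }
                ],
            }
        },
    },
    "agents": {
        "defaults": {
            "model": {"primary": "bailian/qwen3.6-flash"},
            "models": {"bailian/qwen3.6-flash": {}},
        }
    },
}


def deep_merge(base: dict[str, Any], patch: dict[str, Any]) -> dict[str, Any]:
    """Return a new dict; nested dicts merge recursively, other patch values win."""
    merged = dict(base)
    for key, value in patch.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


def merge_config_file(path: Path, patch: dict[str, Any]) -> None:
    """Merge patch into the JSON file at path, keeping a .bak copy of the original."""
    if path.exists():
        original = path.read_text(encoding="utf-8")
        path.with_name(path.name + ".bak").write_text(original, encoding="utf-8")
        existing = json.loads(original)
    else:
        path.parent.mkdir(parents=True, exist_ok=True)
        existing = {}
    merged = deep_merge(existing, patch)
    path.write_text(json.dumps(merged, indent=2) + "\n", encoding="utf-8")
```

To apply it, call `merge_config_file(Path.home() / ".openclaw" / "openclaw.json", BAILIAN_PATCH)` once and review the result; the previous file is kept as openclaw.json.bak.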

Qwen Code

Qwen3.6-35B-A3B works with Qwen Code, an open-source AI agent built for the terminal and deeply optimized for the Qwen series. Use the following script to get started:

```bash
# Node.js 20+
npm install -g @qwen-code/qwen-code@latest

# Start Qwen Code (interactive)
qwen

# Then, in the session:
/help
/auth
```

On first use, you will be prompted to sign in. You can run /auth at any time to switch authentication methods.

Claude Code

The Qwen API also supports the Anthropic API protocol, so you can use it with tools such as Claude Code for a better coding experience:

```bash
# Install Claude Code
npm install -g @anthropic-ai/claude-code

# Configure environment
export ANTHROPIC_MODEL="qwen3.6-flash"
export ANTHROPIC_SMALL_FAST_MODEL="qwen3.6-flash"
export ANTHROPIC_BASE_URL=https://dashscope.aliyuncs.com/apps/anthropic
export ANTHROPIC_AUTH_TOKEN=<your_api_key>

# Launch the CLI
claude
```

Summary

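Qwen3.6-35B-A3B shows that a sparse MoE model can achieve excellent agentic coding and reasoning. With only 3B active parameters, it delivers performance comparable to dense models with many times more active parameters, and it is equally strong on multimodal benchmarks. Released with fully open weights, it sets a new bar for what models of this size can do.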

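Looking ahead, we will keep expanding the Qwen3.6 open-source family and pushing the boundaries of what efficient open models can achieve. We sincerely thank the community for its valuable feedback and look forward to seeing what you build with Qwen3.6-35B-A3B. Stay tuned for our upcoming releases!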